Example setup for Eyelink 1000 Plus, using PsychoPy 3.0.¶

Sample code to run the SR Research Eyelink eye-tracking system. The code is optimized for the Eyelink 1000 Plus (5.0) but should be compatible with earlier systems.

Before Running the Participant¶

Import package¶

[ ]:

import imhr

Set task parameters, either directly from PsychoPy or created manually.

[ ]:

from psychopy import visual, core

expInfo = {'condition': 'even', u'participant': '001', 'dominant eye': 'left', 'corrective': 'False'}

subject = expInfo['participant']

dominant_eye = expInfo['dominant eye']

# `psychopy.core.Clock.CountdownTimer` instance

routineTimer = core.CountdownTimer()

# `psychopy.visual.window.Window` instance

window = visual.Window(size=[1920, 1080], fullscr=False, allowGUI=True, units='pix', winType='pyglet', color=[110,110,110], colorSpace='rgb255')

window.flip()

Initialize imhr.eyetracking.Eyelink¶

Note

Initialize imhr.eyetracking.Eyelink only after the PsychoPy window instance has been created in the experiment file. This window is used by the calibration function.

[ ]:

# Initialize imhr.eyetracking.Eyelink

eyetracking = imhr.eyetracking.Eyelink(window=window, libraries=True, subject=subject, timer=routineTimer, demo=True)

Connect to the Eyelink Host¶

This controls the parameters to be used when running the eyetracker.

[ ]:

param = eyetracking.connect(calibration_type=13)

Preparing the Participant¶

Set the dominant eye¶

This step is required for receiving gaze coordinates from Eyelink->PsychoPy.

[ ]:

eye_used = eyetracking.set_eye_used(eye=dominant_eye)

Start calibration¶

When calibration has been completed, it returns True.

[ ]:

# start calibration

calibration = eyetracking.calibration()

Note

Press “o” on the calibration screen to begin the task. The calibration, validation, and task-start events are controlled by the keyboard: calibration (“c”), validation (“v”), and task-start (“o”).

Figure: Sample calibration.

(Optional) Print message to console/terminal¶

Allows printing color coded messages to console/terminal/cmd. This may be useful for debugging issues.

[ ]:

eyetracking.console("eyetracking.calibration() started", "blue")

(Optional) Drift correction¶

This can be done at any point after calibration, including before and after imhr.eyetracking.Eyelink.start_recording() has started. When drift correction has been completed, it returns True.

[ ]:

drift = eyetracking.drift_correction()

Task Starting¶

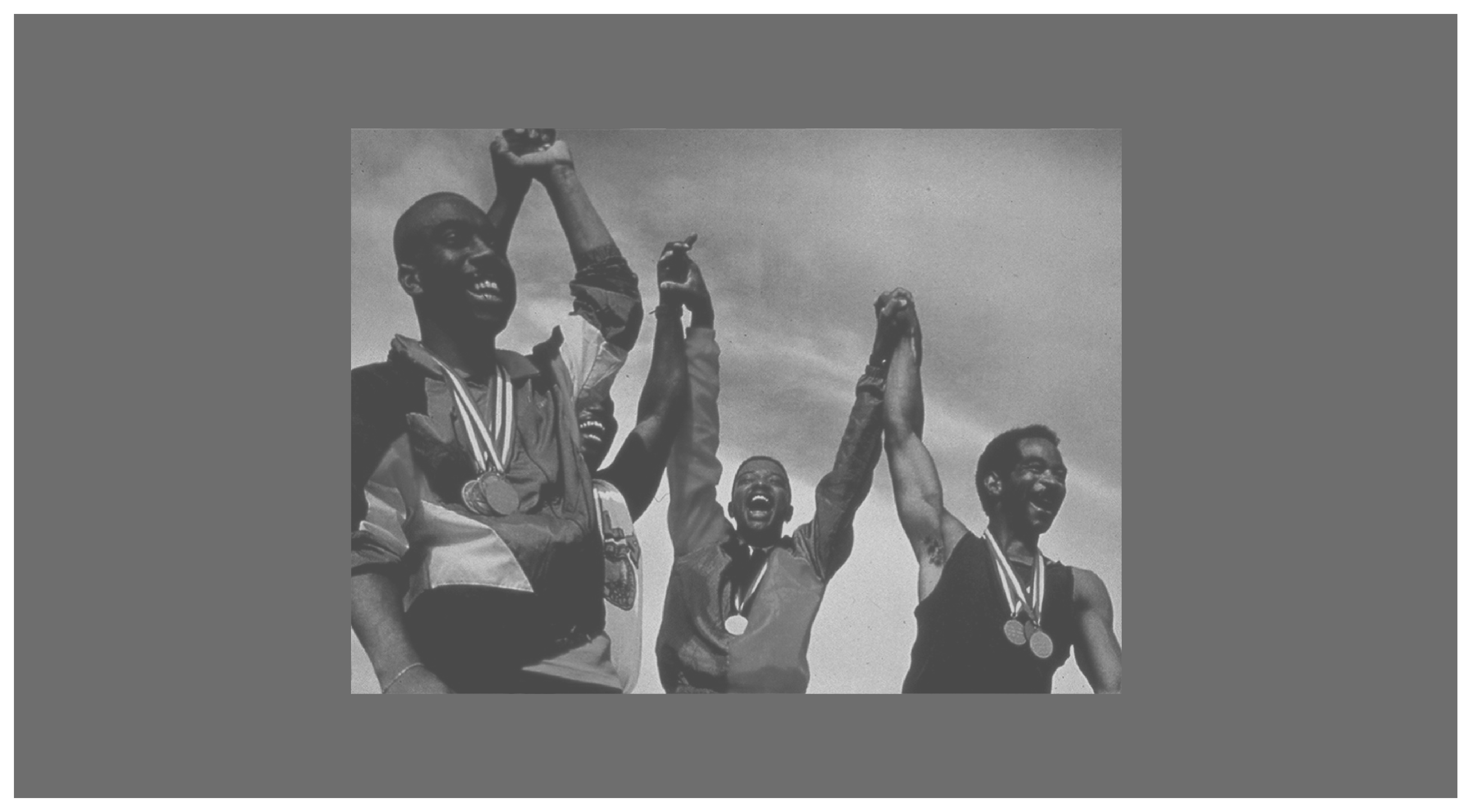

Figure: Sample trial.

Start recording¶

Note

This should be run at the start of the trial. Also, there is an intentional delay of 150 msec to allow the Eyelink to buffer gaze samples that will show up in your data.

[ ]:

# Create stimulus (demonstration purposes only).

filename = "8380.bmp"

# `root` is assumed to be the project root directory, defined earlier in the experiment script.

path = '%s/dist/stimuli/%s'%(root, filename)

size = (1024, 768) #image size

pos = (0, 0) #with units='pix', (0, 0) is the center of the window

stimulus = visual.ImageStim(win=window, name="stimulus", units='pix', image=path,

    pos=pos, size=size, flipHoriz=False, flipVert=False, texRes=128,

    interpolate=True, depth=-1.0)

stimulus.setAutoDraw(True)

# Note: PsychoPy requires two flips when displaying a new image: the first clears the screen and the second updates it.

window.flip()

window.flip()

[ ]:

# start recording

eyetracking.start_recording(trial=1, block=1)

(Optional) Initiate gaze contingent event¶

This is used for realtime data collection from Eyelink->PsychoPy.

[ ]:

# In this example, the participant must look within the bounding box for at least

# t_min (5000 msec) before the task continues. If this has not happened once t_max

# (20000 msec) has elapsed, drift correction is initiated.

bound = dict(left=448, top=156, right=1472, bottom=924)

eyetracking.gc(bound=bound, t_min=5000, t_max=20000)
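The bound values above match a 1024 x 768 image centered on a 1920 x 1080 display. A minimal sketch of how such a box could be derived is shown here, assuming the bound is expressed in screen pixels with the origin at the top-left corner (an assumption; check the imhr documentation). `centered_bound` and `in_bound` are hypothetical helper names and are not part of imhr.

```python
def centered_bound(screen_size, image_size):
    """Return the edges of an image centered on the screen.

    Assumes a top-left origin with x increasing rightward and y downward.
    """
    screen_w, screen_h = screen_size
    image_w, image_h = image_size
    left = (screen_w - image_w) // 2
    top = (screen_h - image_h) // 2
    return dict(left=left, top=top, right=left + image_w, bottom=top + image_h)


def in_bound(bound, x, y):
    """Return True if the point (x, y) lies inside the box, edges included."""
    return (bound["left"] <= x <= bound["right"]
            and bound["top"] <= y <= bound["bottom"])


bound = centered_bound(screen_size=(1920, 1080), image_size=(1024, 768))
print(bound)  # {'left': 448, 'top': 156, 'right': 1472, 'bottom': 924}
```

Deriving the box from the window and image sizes keeps it in step with the stimulus if either size changes.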

(Optional) Collect real-time gaze coordinates from Eyelink¶

Note

Samples need to be collected at an interval of 1000/(sampling rate) msec to prevent oversampling.

[ ]:

gxy, ps, sample = eyetracking.sample() # get gaze coordinates, pupil size, and sample
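If the gaze coordinates are to be used for drawing in PsychoPy, they may need converting. PsychoPy's 'pix' units place (0, 0) at the center of the window with y increasing upward. The sketch below assumes that gxy is reported in screen pixels with a top-left origin and y increasing downward; that is an assumption about the values returned by eyetracking.sample(), so verify it against your own data. `gaze_to_psychopy` is a hypothetical helper, not part of imhr.

```python
def gaze_to_psychopy(gxy, window_size):
    """Convert top-left-origin screen pixels to PsychoPy 'pix' coordinates."""
    gx, gy = gxy
    width, height = window_size
    # Shift the origin to the window center and flip the y axis.
    return (gx - width / 2.0, height / 2.0 - gy)


center = gaze_to_psychopy((960, 540), (1920, 1080))
top_left = gaze_to_psychopy((0, 0), (1920, 1080))
print(center, top_left)
```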

Example use of eyetracking.sample()

[ ]:

# In our example, the sampling rate of our device (Eyelink 1000 Plus) is 500 Hz.

import time

s1 = 0 # current time

lgxy = [] # list of gaze coordinates (demonstration purposes only)

s0 = time.perf_counter() # initial timestamp (time.clock() was removed in Python 3.8)

# repeat

while True:

    # if at least 2.01 msec have passed since the last sample, collect a new sample

    diff = (s1 - s0)

    if diff >= .00201:

        gxy, ps, sample = eyetracking.sample() # get gaze coordinates, pupil size, and sample

        lgxy.append(gxy) # store in list (not required; demonstration purposes only)

        s0 = time.perf_counter() # update starting time

    # else update the current time

    else:

        s1 = time.perf_counter()

    # break the `while` loop once the list holds 200 gaze coordinates (not required; demonstration purposes only)

    if len(lgxy) >= 200: break
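The 1000/(sampling rate) rule can also be written as a small reusable helper. The sketch below is one possible arrangement, not the imhr implementation: `sample_interval`, `poll`, and `fake_sample` are hypothetical names, and `fake_sample` stands in for eyetracking.sample() so the block runs without an eye tracker.

```python
import time


def sample_interval(sampling_rate_hz):
    """Return the minimum time between samples, in seconds."""
    return 1.0 / sampling_rate_hz


def poll(get_sample, sampling_rate_hz, n_samples):
    """Call get_sample() no more often than once per sampling interval."""
    interval = sample_interval(sampling_rate_hz)
    samples = []
    # Backdate the last timestamp so the first sample is taken immediately.
    last = time.perf_counter() - interval
    while len(samples) < n_samples:
        now = time.perf_counter()
        if now - last >= interval:
            samples.append(get_sample())
            last = now
    return samples


def fake_sample():
    """Stand-in for eyetracking.sample(): gaze coordinates, pupil size, sample."""
    return (960.0, 540.0), 1000.0, None


collected = poll(fake_sample, sampling_rate_hz=500, n_samples=20)
print(len(collected), sample_interval(500))
```

At 500 Hz the interval is 2 msec; the loop above uses 2.01 msec, which leaves a small margin over that minimum.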

(Optional) Send messages to Eyelink¶

This allows post-hoc processing of event markers (e.g. “stimulus onset”).

[ ]:

# Sending message "stimulus onset".

eyetracking.send_message(msg="stimulus onset")

Task Ending¶

Stop recording¶

Optionally, this also sends trial-level variables to Eyelink.

Note

Sending variables is optional. If they are included, they must be in dict format.

[ ]:

# Prepare variables to be sent to Eyelink

variables = dict(stimulus=filename, trial_type='encoding', race="black")

# Stop recording

eyetracking.stop_recording(trial=1, block=1, variables=variables)

Finish recording¶

[ ]:

eyetracking.finish_recording()
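The per-trial steps above are typically repeated inside a loop, with finish_recording() called once at the end. The sketch below illustrates only that call order. `RecordingStub` is a hypothetical stand-in that logs calls and does not talk to an eye tracker; its method names mirror the ones used in this example, while its behavior (including the dict check) is an assumption and not the imhr implementation. The stimulus filenames are placeholders.

```python
class RecordingStub:
    """Stand-in tracker that only records the order of calls."""

    def __init__(self):
        self.calls = []

    def start_recording(self, trial, block):
        self.calls.append(("start_recording", trial, block))

    def send_message(self, msg):
        self.calls.append(("send_message", msg))

    def stop_recording(self, trial, block, variables=None):
        # Trial-level variables are optional but must be a dict when given.
        if variables is not None and not isinstance(variables, dict):
            raise TypeError("variables must be a dict")
        self.calls.append(("stop_recording", trial, block))

    def finish_recording(self):
        self.calls.append(("finish_recording",))


def run_block(tracker, block, stimuli):
    """Run one block: start, mark stimulus onset, and stop for every trial."""
    for trial, filename in enumerate(stimuli, start=1):
        tracker.start_recording(trial=trial, block=block)
        tracker.send_message(msg="stimulus onset")
        variables = dict(stimulus=filename, trial_type="encoding")
        tracker.stop_recording(trial=trial, block=block, variables=variables)


tracker = RecordingStub()
run_block(tracker, block=1, stimuli=["a.bmp", "b.bmp"])  # placeholder filenames
tracker.finish_recording()
print(len(tracker.calls))
```

In a real experiment, the stub would be replaced by the eyetracking object created earlier, and stimulus drawing and window flips would sit between the start and stop calls.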

Close PsychoPy¶

[ ]:

window.close()